It's 2 PM on a Tuesday, and your CFO just walked over to your desk with a printout of last month's AWS bill. "Why did our cloud spend go up 40%?" she asks. You stare at the invoice—EC2, EKS, NAT Gateway, data transfer, something called "Elastic Load Balancing"—and realize you have no idea which of your fifteen applications caused the spike. Was it the new marketing site? The ML pipeline someone spun up for a hackathon? The staging environment that nobody turned off?

This scenario plays out in engineering organizations every month. Kubernetes promised operational efficiency, and it delivered—but it also created a cost attribution nightmare. When everything runs as pods on shared nodes in shared clusters, the clean lines between "your app" and "my app" disappear into a blur of resource requests, limits, and cluster overhead.

The good news: Kubernetes cost allocation is a solved problem in 2026. The bad news: most organizations are solving it the hard way. This guide will walk you through what actually works, when precision matters, and when it's just expensive theater.

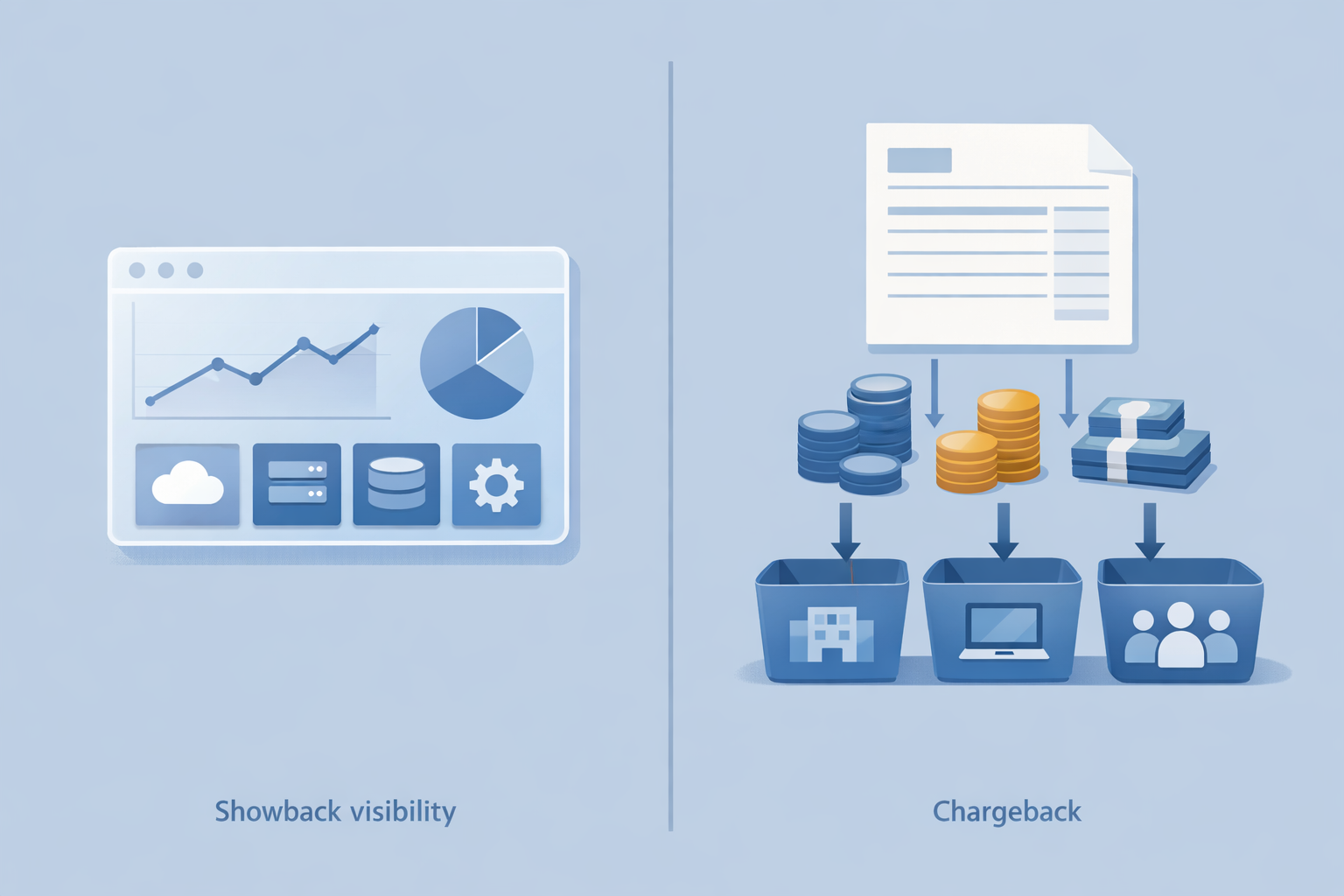

Before diving into implementation, let's clarify two terms that get thrown around interchangeably but mean very different things.

Showback is visibility without financial consequences. You're generating reports that show teams how much infrastructure their applications consume, but you're not actually billing them or affecting their budgets. It's informational. The goal is awareness and behavior change through transparency.

Chargeback is actual financial allocation. Costs flow into team budgets, departments get invoiced, or clients receive line items on their bills. This requires defensible accuracy because real money changes hands.

Here's the critical insight most organizations miss: start with showback. Chargeback sounds more mature, but implementing it before you have accurate attribution creates organizational friction without delivering value. Teams will dispute charges they don't understand, finance will lose trust in the numbers, and you'll spend more time defending methodology than optimizing costs.

For agencies billing clients for infrastructure, this distinction is even more important. Showback lets you validate your attribution model against actual cloud bills before you start invoicing. Nothing damages client relationships faster than a billing dispute over infrastructure charges you can't explain.

Kubernetes provides several mechanisms for cost attribution, each with different granularity and implementation complexity.

Namespaces are the most natural unit for cost attribution. In Convox, each application runs in its own namespace (formatted as rackname-appname), providing clean isolation between workloads. Namespace-level attribution captures all pods, services, persistent volumes, and other resources associated with an application.

Labels allow more flexible attribution across namespace boundaries. You can label resources by team, project, environment, or cost center. Convox automatically applies labels like app, service, release, and type to all deployed resources, giving you attribution data without manual configuration.

Node pools (or node groups in EKS terminology) let you isolate workloads to specific instance types. This is useful when certain applications have specialized requirements—GPU workloads, memory-intensive jobs, or compliance requirements that mandate dedicated infrastructure. Convox supports this through custom node group configurations and workload placement rules.

Storage classes define different tiers of persistent storage with varying performance and cost characteristics. Attributing storage costs by application is straightforward when each app uses its own persistent volume claims.

The challenge isn't accessing this data—it's correlating it with actual cloud costs and handling the shared resources that don't fit neatly into any attribution bucket.
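As a rough illustration of how namespace and label data roll up, the sketch below sums resource requests per namespace or per label. The pod records, the namespace names, and the team label are hypothetical; a real inventory would come from the Kubernetes API or a cost tool, and the totals would still need to be priced against the cloud bill.

```python
from collections import defaultdict

# Hypothetical pod inventory. Namespaces follow the rackname-appname pattern;
# the "team" label is an assumed custom label, not one applied automatically.
PODS = [
    {"namespace": "prod-api", "labels": {"app": "api", "service": "web", "team": "backend"},
     "cpu_millicores": 512, "memory_mb": 1024},
    {"namespace": "prod-api", "labels": {"app": "api", "service": "worker", "team": "backend"},
     "cpu_millicores": 256, "memory_mb": 512},
    {"namespace": "prod-site", "labels": {"app": "site", "service": "web", "team": "marketing"},
     "cpu_millicores": 256, "memory_mb": 512},
    {"namespace": "prod-ml", "labels": {"app": "ml", "service": "trainer"},
     "cpu_millicores": 2048, "memory_mb": 8192},
]


def sum_requests(pods, label=None):
    """Sum CPU and memory requests per namespace, or per value of `label` if given."""
    totals = defaultdict(lambda: {"cpu_millicores": 0, "memory_mb": 0})
    for pod in pods:
        if label is None:
            group = pod["namespace"]
        else:
            # Pods missing the label land in an explicit "unlabeled" bucket.
            group = pod["labels"].get(label, "unlabeled")
        totals[group]["cpu_millicores"] += pod["cpu_millicores"]
        totals[group]["memory_mb"] += pod["memory_mb"]
    return dict(totals)


by_namespace = sum_requests(PODS)
by_team = sum_requests(PODS, label="team")
print(by_namespace["prod-api"])  # {'cpu_millicores': 768, 'memory_mb': 1536}
print(sorted(by_team))           # ['backend', 'marketing', 'unlabeled']
```

The explicit "unlabeled" bucket is a deliberate choice: it keeps missing labels visible instead of silently dropping those pods from the report.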

Let's be honest about the limits of Kubernetes cost attribution. Some costs simply can't be attributed to individual applications without making arbitrary decisions.

Control plane costs: EKS charges $0.10 per cluster hour (about $73/month) regardless of what runs on it. GKE Autopilot bills compute based on pod resource requests, while GKE Standard clusters carry a similar fixed per-cluster fee. This is true cluster overhead.

System services: CoreDNS, kube-proxy, CNI plugins, and the cluster autoscaler run on every cluster. In Convox, you also have the router, API, and other system services that enable the platform. These consume resources but serve all applications equally.

Monitoring and observability: Your metrics collection, log aggregation, and tracing infrastructure benefits all applications. Running Prometheus, Fluentd, or your APM agents has costs that don't belong to any single app.

Networking infrastructure: Load balancers, NAT gateways, and data transfer costs often can't be cleanly attributed. A shared ingress controller routes traffic to all your applications—how do you allocate its cost?

Idle capacity: If you maintain headroom for scaling or run minimum node counts for high availability, that capacity isn't attributable to current workloads. It's insurance.

Trying to force attribution of these shared costs at the pod level creates false precision. You end up with elaborate formulas that look accurate but are really just distributing arbitrary allocations. Sometimes the honest answer is "this is platform overhead."

Here's a cost allocation model that balances accuracy with implementation complexity. We'll use realistic numbers from a mid-sized deployment.

Scenario: A SaaS company runs 12 applications on a single EKS cluster. Their monthly AWS bill for the Kubernetes infrastructure is $15,000.

Step 1: Identify directly attributable costs

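These are costs that clearly belong to specific applications, such as the compute requested by an application's pods, its persistent volumes, and any databases provisioned for it.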

In our example, let's say $10,500 (70%) of the monthly cost is directly attributable.

Step 2: Calculate shared platform costs

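The remaining $4,500 (30%) represents shared infrastructure: control plane fees, system services, monitoring and observability, shared networking, and idle capacity.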

Step 3: Distribute shared costs proportionally

Allocate shared costs based on each application's share of directly attributable costs. If your API service accounts for 25% of directly attributable costs, it receives 25% of shared costs.

This creates a simple formula:

App Total Cost = Direct Costs + (Direct Cost Share % × Shared Costs)

Example for "api-service":

Direct costs: $2,625 (25% of $10,500)

Shared allocation: $1,125 (25% of $4,500)

Total: $3,750/month

This model is defensible, easy to explain, and close enough to reality for most business purposes. It acknowledges that shared costs exist while distributing them fairly based on actual usage.
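The proportional model above can be sketched in a few lines of Python. This is a minimal illustration, not a billing implementation: the function name is made up, and "other-apps" is a hypothetical bucket that lumps the remaining eleven applications together so the example stays short.

```python
def allocate_costs(direct_costs, shared_costs):
    """Return per-app direct, shared, and total cost using proportional allocation."""
    direct_total = sum(direct_costs.values())
    if direct_total <= 0:
        raise ValueError("direct costs must sum to a positive amount")
    allocation = {}
    for app, direct in direct_costs.items():
        share = direct / direct_total   # app's share of directly attributable costs
        shared = share * shared_costs   # the same share of the shared pool
        allocation[app] = {"direct": direct, "shared": shared, "total": direct + shared}
    return allocation


# Figures from the example: $10,500 direct and $4,500 shared.
# "other-apps" is a hypothetical bucket for the remaining eleven applications.
direct = {"api-service": 2625, "other-apps": 7875}
result = allocate_costs(direct, shared_costs=4500)
print(result["api-service"])  # {'direct': 2625, 'shared': 1125.0, 'total': 3750.0}
```

Because every application receives the same share of shared costs as it has of direct costs, the per-app totals always add back up to the full bill ($15,000 here), which makes the report easy to reconcile.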

Several tools have emerged to solve Kubernetes cost allocation. Here's an honest assessment of each.

Kubecost is the market leader for dedicated Kubernetes cost management. It provides real-time cost visibility, allocation by namespace and label, and integration with cloud billing APIs. The free tier works for basic showback; enterprise features include chargeback reports, savings recommendations, and multi-cluster support. Implementation takes a few hours, and accuracy improves over time as it correlates cluster data with actual cloud bills.

OpenCost is the CNCF project (originally accepted at the sandbox level and since promoted to incubating) that Kubecost contributed as an open-source foundation. It provides the core cost allocation engine without the enterprise features. If you have engineering capacity to build dashboards and reports on top of raw data, OpenCost is a solid foundation.

Cloud-native solutions vary by provider. AWS Cost Explorer with cost allocation tags, GCP BigQuery billing exports, and Azure Cost Management all provide cloud-level cost data. They work but require manual correlation with Kubernetes resources. The mapping from "EC2 instance i-1234567890" to "pods running my web app" isn't automatic.

Platform-level abstraction is often the simplest approach. When your deployment platform already provides application-level isolation—namespaces, labeled resources, dedicated databases—cost attribution becomes natural. You query costs by application name rather than trying to correlate pod-level metrics with cloud billing line items.

The right choice depends on your organization's complexity and precision requirements. For most mid-market companies, platform-level abstraction combined with the proportional allocation model described above provides 90% of the value at 10% of the implementation cost.
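To make the manual correlation step concrete, here is a simplified sketch of mapping one node's cost onto the pods running on it. It splits by CPU requests only, which is an assumption made for brevity; dedicated tools typically weigh memory and other resources as well. The node price, capacity, and pod names are hypothetical.

```python
def split_node_cost(node_hourly_cost, node_cpu_millicores, pod_cpu_requests):
    """Attribute a node's hourly cost to pods by CPU request; return (costs, idle)."""
    requested = sum(pod_cpu_requests.values())
    if requested > node_cpu_millicores:
        raise ValueError("pod requests exceed node capacity")
    costs = {
        pod: node_hourly_cost * cpu / node_cpu_millicores
        for pod, cpu in pod_cpu_requests.items()
    }
    # Whatever no pod requested stays unattributed as idle capacity.
    idle = node_hourly_cost - sum(costs.values())
    return costs, idle


# Hypothetical node: $0.20/hour with 4000 millicores of allocatable CPU.
costs, idle = split_node_cost(0.20, 4000, {"web-7c9f": 1000, "worker-5d2a": 2000})
for pod, cost in costs.items():
    print(f"{pod}: ${cost:.3f}/hour")
print(f"idle: ${idle:.3f}/hour")
```

In this example a quarter of the node cost goes to the first pod, half to the second, and the remaining quarter is idle capacity. Repeating this for every node, every hour, is the bookkeeping that dedicated tools or a platform-level abstraction take off your hands.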

There's a temptation to pursue maximal precision in cost allocation—tracking every pod's CPU milliseconds, every byte of network transfer, every IOPS to storage. Resist it.

The implementation cost is high. Collecting, storing, and analyzing per-pod metrics at billing granularity requires substantial infrastructure. You need detailed metrics retention (expensive), correlation with cloud billing (complex), and reporting systems (time-consuming to build).

The accuracy gain is marginal. Moving from namespace-level to pod-level attribution might improve accuracy by 5-10%. But if your goal is helping teams understand their costs and make better decisions, namespace-level is sufficient. Nobody optimizes based on which of their twenty pods is 3% more expensive.

The maintenance burden is ongoing. Per-pod attribution breaks when pods are ephemeral (which they are), when resource requests don't match actual usage (common), and when cluster autoscaling changes node costs mid-day (always). You'll spend more time explaining anomalies than gaining insights.

When pod-level precision matters: There are legitimate cases. Multi-tenant SaaS where customers pay based on consumption. ML workloads where GPU-hours directly drive billing. Regulatory environments requiring detailed cost auditing. If you're in one of these situations, the investment in precision is justified.

For everyone else, start with application-level attribution. You can always add precision later if specific use cases demand it.

Based on your organization's characteristics, here's guidance on which approach to implement.

Small teams (1-5 apps, single cluster, <$5K/month cloud spend):

Use cloud provider cost reports plus manual namespace-level analysis. Monthly review is sufficient. Focus on right-sizing nodes and eliminating unused resources rather than precise attribution. A platform like Convox with built-in monitoring provides enough visibility for this scale.

Mid-market teams (5-15 apps, 1-3 clusters, $5K-$50K/month):

Implement showback with namespace-level attribution using the proportional allocation model. Consider Kubecost free tier for automated reporting. Establish monthly cost reviews per team. Use Convox's application-centric model to simplify attribution—each app is a deployment unit with known resources.

Enterprise teams (15+ apps, multi-cluster, $50K+/month):

Deploy Kubecost or equivalent for real-time visibility. Implement chargeback to team budgets. Use dedicated node groups for cost isolation of high-spend workloads. Consider FinOps practices with dedicated optimization roles.

Agencies billing clients:

Run separate Convox Racks per client for clean cost isolation, or use strict namespace separation with label-based attribution. Validate attribution against actual bills for at least two months before incorporating into client invoices. Build margin into shared costs rather than trying to allocate them precisely.
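Validating attribution against the actual bill can start as a simple reconciliation check. The sketch below is one possible shape for it; the client names, amounts, and the 2% tolerance are assumptions chosen for illustration, not recommended thresholds.

```python
def reconcile(attributed, invoice_total, tolerance=0.02):
    """Compare attributed costs with the invoice; tolerance is a fraction of the invoice."""
    if invoice_total <= 0:
        raise ValueError("invoice total must be positive")
    attributed_total = sum(attributed.values())
    gap = invoice_total - attributed_total
    return {
        "attributed_total": attributed_total,
        "gap": gap,
        "within_tolerance": abs(gap) / invoice_total <= tolerance,
    }


# Hypothetical month: two clients attributed against a $15,000 invoice.
report = reconcile({"client-a": 6200, "client-b": 8600}, invoice_total=15000)
print(report)  # {'attributed_total': 14800, 'gap': 200, 'within_tolerance': True}
```

A gap that stays within tolerance for a couple of billing cycles is a reasonable signal that the model is ready to appear on invoices; a growing gap usually points to untagged or newly added resources.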

The most accurate cost attribution system in the world is useless if teams don't act on the information. Here's how to drive actual behavior change.

Make costs visible in context. Don't send monthly spreadsheets that get ignored. Surface costs in the tools engineers use daily. The Convox Console shows application status, resources, and scaling configuration in one place—extending this to include cost context (even rough estimates) makes optimization part of normal operations.

Tie costs to business metrics. "$500/month for our API" is abstract. "$0.02 per thousand API requests" is actionable. When teams understand unit economics, they can make informed decisions about optimizations.
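The unit-economics conversion is simple arithmetic. In the sketch below, the $500 monthly cost comes from the example above, while the 25 million monthly requests is an assumed volume chosen so that the result matches the $0.02 figure.

```python
def cost_per_thousand(monthly_cost, monthly_requests):
    """Infrastructure cost per 1,000 requests for one month."""
    if monthly_requests <= 0:
        raise ValueError("monthly_requests must be positive")
    return monthly_cost / monthly_requests * 1000


# $500/month is taken from the text; 25 million requests is an assumed volume.
unit_cost = cost_per_thousand(500, 25_000_000)
print(f"${unit_cost:.2f} per 1,000 requests")  # $0.02 per 1,000 requests
```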

Celebrate wins, don't just flag overruns. If a team reduces their infrastructure costs by 30% through better resource allocation, recognize it. Positive reinforcement drives more behavior change than cost center guilt.

Give teams control. Cost awareness without the ability to act is frustrating. Convox's scaling configuration lets teams adjust their resource allocation directly in their convox.yml:

    services:
      web:
        build: .
        port: 3000
        scale:
          count: 2-10
          cpu: 256
          memory: 512
          targets:
            cpu: 70

When developers can see how their resource configuration affects costs and have the autonomy to change it, optimization becomes everyone's job, not just the platform team's problem.
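To give a feel for how a scale block translates into spend, the sketch below estimates a monthly cost range for the web service configuration shown above (count 2-10, cpu 256, memory 512). It assumes cpu is expressed in thousandths of a core and memory in MB, and the hourly rates are hypothetical placeholders; substitute the effective rates from your own bill.

```python
HOURS_PER_MONTH = 730


def monthly_cost_range(count, cpu_units, memory_mb, vcpu_hour_rate, gb_hour_rate):
    """Estimate (min, max) monthly cost for a service from its scale settings."""
    low, _, high = count.partition("-")
    min_count = int(low)
    max_count = int(high) if high else min_count  # "3" means a fixed count
    per_process = (
        cpu_units / 1000 * vcpu_hour_rate + memory_mb / 1024 * gb_hour_rate
    ) * HOURS_PER_MONTH
    return min_count * per_process, max_count * per_process


# Hypothetical rates: $0.04 per vCPU-hour and $0.005 per GB-hour.
low, high = monthly_cost_range("2-10", cpu_units=256, memory_mb=512,
                               vcpu_hour_rate=0.04, gb_hour_rate=0.005)
print(f"${low:.2f} to ${high:.2f} per month")
```

Even a rough range like this tells a team what the autoscaling ceiling could cost before they raise it.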

Effective cost attribution depends on consistent resource tagging. Here's a tagging strategy that scales.

Mandatory tags for all resources:

app: The application name

environment: production, staging, development

team or owner: The responsible team

Convox automatically applies these through its deployment process. When you run convox deploy, all created resources inherit labels from the application configuration.

Optional tags for additional granularity:

cost-center: Finance-defined cost center codes

project: For cross-team initiatives

client: For agencies or multi-tenant deployments

You can add custom tags to AWS resources at the rack level using the tags parameter:

    $ convox rack params set tags=cost-center=engineering,business-unit=platform -r production

The key is consistency. A tag that's applied to 80% of resources is almost useless for cost attribution, because you'll always be chasing down the untagged 20%. Automate tag application through your deployment pipeline to ensure compliance.
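A tag coverage check is one way to catch the untagged remainder early. The sketch below treats the mandatory tags listed above as required and accepts either team or owner; the resource ids and tag values are made up, and a real inventory would come from your cloud provider's tagging or billing APIs.

```python
def is_fully_tagged(tags):
    """True when app, environment, and either team or owner are all present."""
    return bool(tags.get("app") and tags.get("environment")
                and (tags.get("team") or tags.get("owner")))


def tag_coverage(resources):
    """Return (fraction of fully tagged resources, ids of the rest)."""
    if not resources:
        return 1.0, []
    missing = [r["id"] for r in resources if not is_fully_tagged(r["tags"])]
    return (len(resources) - len(missing)) / len(resources), missing


# Hypothetical inventory; ids and tag values are made up for illustration.
RESOURCES = [
    {"id": "lb-1", "tags": {"app": "api", "environment": "production", "team": "backend"}},
    {"id": "db-1", "tags": {"app": "api", "environment": "production", "owner": "backend"}},
    {"id": "vol-1", "tags": {"app": "site", "environment": "staging", "team": "marketing"}},
    {"id": "vol-2", "tags": {"app": "ml", "environment": "production", "team": "data"}},
    {"id": "vol-3", "tags": {"app": "ml"}},
]

coverage, untagged = tag_coverage(RESOURCES)
print(f"{coverage:.0%} fully tagged; fix: {untagged}")  # 80% fully tagged; fix: ['vol-3']
```

Running a check like this in the deployment pipeline, and failing the build below a chosen threshold, is one way to enforce the consistency described above.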

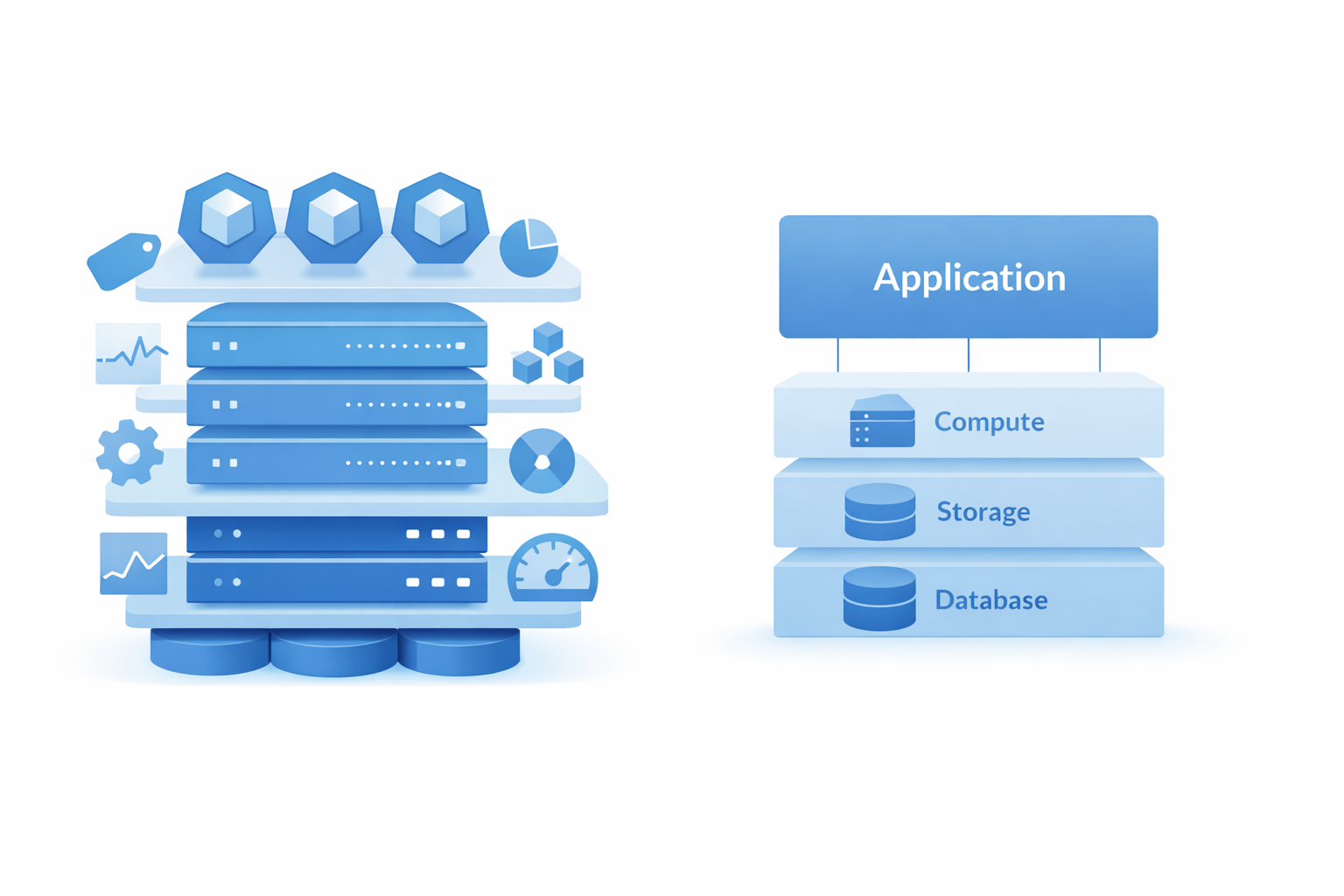

We've covered a lot of complexity—namespaces, labels, node groups, allocation formulas, tooling. Here's the secret: most of this complexity disappears when you operate at the right abstraction level.

Consider what Convox provides by default:

Each application is a namespace. No configuration required. Deploy an app, and it gets isolated into its own Kubernetes namespace with consistent naming.

Resources are application-scoped. When you define a database in your convox.yml, it's automatically linked to your application:

    resources:
      database:
        type: rds-postgres
        options:
          class: db.t3.medium
          storage: 100
    services:
      web:
        build: .
        port: 3000
        resources:
          - database

That RDS instance is clearly attributable to this application. No tagging required: it's provisioned through the app and appears in the app's resource list.

Scaling configuration is declarative. Resource allocation is defined in code, version-controlled, and visible. You can see exactly what resources each application requests and limits.

Multiple environments are multiple Racks. Running separate Racks for production and staging gives you natural cost separation by environment. Different Racks can run on different instance types, in different regions, with different configurations—all cleanly separated in your cloud bill.

This is the point: Kubernetes cost allocation is complex because Kubernetes exposes low-level primitives. A platform that provides application-level abstraction—where the unit of deployment matches the unit of cost attribution—eliminates most of that complexity by design.

Here's a practical timeline for implementing cost visibility in your organization.

Week 1: Establish baseline

Week 2: Build attribution model

Week 3: Validate and iterate

Week 4: Launch showback

After month one, evaluate whether to invest in more sophisticated tooling (Kubecost), proceed to chargeback, or maintain showback as the ongoing model. Most organizations find that monthly showback reports with the proportional allocation model provide sufficient visibility for budget management and optimization decisions.

Getting Kubernetes cost allocation right isn't about perfect precision—it's about providing enough visibility to make good decisions. The 70/30 rule (direct attribution for most costs, proportional allocation for shared infrastructure) works for the vast majority of organizations.

Start with showback before implementing chargeback. Accept that some costs are legitimately shared platform overhead. Focus on application-level attribution rather than pod-level precision. And choose tooling and platforms that make attribution natural rather than requiring constant maintenance.

The next time your CFO asks why cloud costs went up, you should be able to say: "The API service scaled to handle the product launch, the new ML pipeline is running daily training jobs, and we added a staging environment for the mobile team. Here's the breakdown." That's what good cost allocation enables—informed conversations about infrastructure investment, not defensive explanations of unexplained expenses.

Convox's application-centric model provides natural cost attribution without the complexity of manual Kubernetes resource correlation. Each app runs in its own namespace with automatic labeling, resources are application-scoped, and you can run separate Racks per environment for clean cost separation.

Whether you're running Convox Rack in your own AWS, GCP, or Azure account, or using Convox Cloud Machines for fully managed infrastructure, you get the cost visibility that comes from operating at the right abstraction level.

For enterprises with complex cost allocation requirements or agencies needing client billing integration, reach out to our team to discuss how Convox can simplify your Kubernetes cost management.